> lilypad run sdxl:v0.9-lilypad1 "A record player in a pond of lilypads"

🌐 Overview

The last few weeks have been focused on the launch of Lilypad v2 and gathering feedback on Lilypad AI Studio. We are adding new modules to AI Studio and streamlining the process of submitting your own module to run on the network!

Follow along on X/Twitter for the latest news and updates!

⚒️ Lilypad Engineering Update

Baklava calibration testnet - Lilypad running on IPC!!

At DevConnect Istanbul, Lilypad launched the Baklava calibration testnet! The testnet runs on the Filecoin Calibration network as an InterPlanetary Consensus (IPC) subnet, with faster block times, cheaper transactions, and more room for customization.

Sign up here to contribute computing resources! The goal of the calibration testnet is to stress test core network functions like job scheduling, compute resource allocation and data routing.

Early contributions will be tracked and compute providers will become eligible for rewards as the network matures!! With just one command, anyone with a computer can join the test network.

Lilypad v2 - Aurora Testnet

We announced the launch of Lilypad v2 (Aurora testnet) at the Filecoin Dev Summit Iceland! To view currently supported modules, run a node, or create a module, read the docs. Lilypad v2 supports modules including Mistral LLM, Stable Diffusion XL (SDXL 0.9), LoRA fine-tuning, Filecoin data prep, and more!

To learn more, check out this technical deep dive from Luke Marsden.

🎓 Lilypad Research Update

When a client using a verifiable compute network sends a computational task out to a node and gets the result back, how does the client know the result is correct?

At the FIL Dev Summit in Iceland, Levy Ryblov presented Lilypad's game-theoretic approach to verifiable computing. The Lilypad team is hard at work simulating various approaches to ensure robust anti-cheating mechanisms on the network!
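To give a feel for the kind of question a game-theoretic analysis asks, here is a toy sketch in Python. It is not Lilypad's actual mechanism: the model (a client re-checks a result with some probability, and a node caught cheating loses a penalty) and every name and number in it are assumptions made up for illustration.

```python
# Toy model only: all parameters and function names are hypothetical,
# not taken from Lilypad's protocol.

def honest_payoff(reward: float, cost: float) -> float:
    """Payoff for a node that actually runs the job."""
    return reward - cost


def cheat_payoff(reward: float, penalty: float, check_prob: float) -> float:
    """Expected payoff for a node that returns a fake result at zero cost.

    With probability check_prob the result is re-checked, the node is
    caught, earns nothing, and loses `penalty`. Otherwise it keeps `reward`.
    """
    return (1 - check_prob) * reward - check_prob * penalty


def min_check_probability(reward: float, cost: float, penalty: float) -> float:
    """Check probability at which cheating and honesty pay the same.

    Solves (1 - p) * reward - p * penalty = reward - cost for p.
    Above this value, cheating has the lower expected payoff.
    """
    return cost / (reward + penalty)


if __name__ == "__main__":
    reward, cost, penalty = 10.0, 4.0, 30.0
    threshold = min_check_probability(reward, cost, penalty)
    print(f"break-even check probability: {threshold:.3f}")
    for p in (0.05, 0.2):
        print(p, cheat_payoff(reward, penalty, p), honest_payoff(reward, cost))
```

In this simplified model, raising the penalty lowers how often results need to be re-checked, which is one reason simulations of this sort are useful before fixing network parameters.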

🌟 Lilypad "All the Things" Update

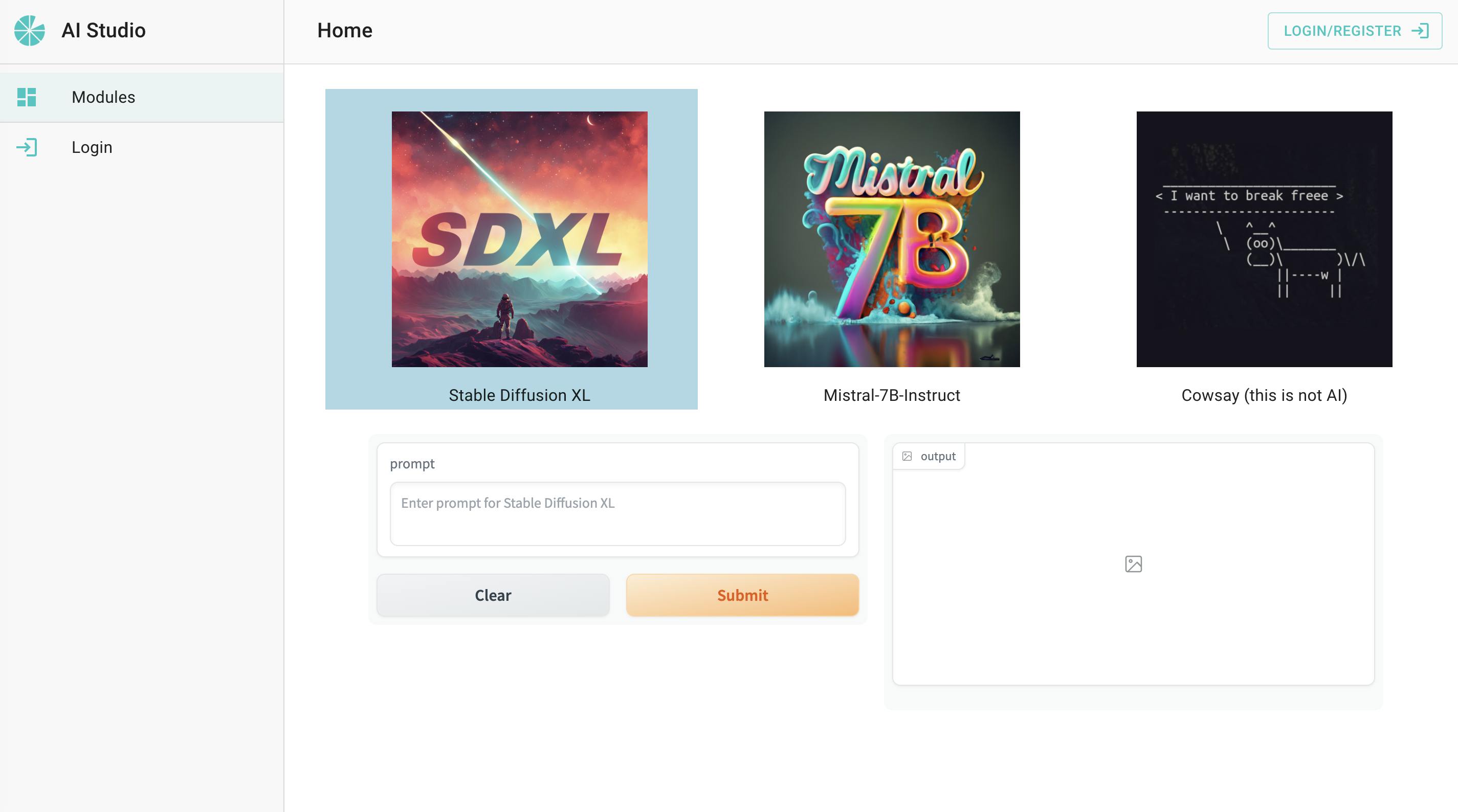

Lilypad AI Studio

Lilypad Network continues to take steps to democratize artificial intelligence with the launch of Lilypad AI Studio. Users can access leading generative models such as Stable Diffusion XL (SDXL) and Mistral-7B-Instruct through an intuitive web interface.

By building a great AI API frontend, we hope to drive demand on the network so that (a) users can buy easy-to-use AI compute at a lower cost, thanks to the decentralized infrastructure it runs on, and (b) node operators have more jobs to service on the network. Read more!

Ally Haire at Smartcon Barcelona!

Check out a presentation from Lilypad founder and CEO, Ally Haire, at Smartcon 2023! She discusses developing a trustless, distributed compute network that enables internet-scale data processing, AI, ML, and other arbitrary computation!

"Lilypad offers an independent global marketplace of GPUs and compute power with global coordination for hardware. The network is accessible to everyone on the same terms and uses inbuilt cryptographic trust mechanisms that can be deployed to any EVM-compatible chain.

There are both ethical and engineering challenges in current AI training and models which distributed networks can help address. Lilypad provides off-chain large-scale compute with on-chain guarantees" - Ally Haire, CEO Lilypad

Lilypad YouTube

Subscribe on YouTube for awesome content and product demos! Here's a quick demo running Stable Diffusion XL (SDXL) with the Lilypad CLI.

🔮 What's Next?

More modules for Lilypad v2 and Lilypad AI Studio are on the way! We are streamlining the workflow for community members to contribute modules. Contact us on Slack for help troubleshooting!

Read the docs to learn more about contributing your own job module!

☎️ Contact Us

💬 Chat to us on Slack: bit.ly/bacalhau-project-slack #bacalhau-lilypad and #lilypad-general